About this article

In this article, I’ll explore the topic of recursion in Python.

This post is the first in a miniseries exploring recursion and algorithms. I based the series on my notes while studying for a Python technical interview.

For quick access, here is the list of all the posts on this series:

- Recursion in Python (this article)

- Sorting algorithms

- Searching algorithms

- The Dijkstra’s Algorithm

Introduction to recursion

In computer science, recursion is a method of solving a computational problem where the solution depends on solutions to smaller instances of the same problem. Recursion solves these problems by using functions that call themselves from within their code.

There are three ingredients in a recursive algorithm:

- One or more base cases for which the function produces the result trivially, without recursion.

- One or more recursive cases for which the function computes the result by calling itself.

- The inputs must change state on each recursive case, moving towards the base case.

Example of a recursive algorithm: Computing the nth Fibonacci number

In mathematics, the Fibonacci numbers form a sequence where each number is the sum of the two preceding ones.

By definition, the 0th Fibonacci number is 0, and the 1st Fibonacci number is 1. All subsequent numbers are the sum of the previous two numbers.

The sequence from the 0th to the 10th Fibonacci number is: 0, 1, 1, 2, 3, 5, 8, 13, 21, 34, 55.

Solving the Fibonacci sequence using iteration

The code below solves the Fibonacci sequence using iteration in Python:

Solving the Fibonacci sequence using recursion

The code below solves the Fibonacci sequence using recursion:

Note that the recursive solution is more readable and intuitive when compared to the iterative solution. Also, note the presence of the three ingredients of recursion:

- When n is 0 or 1, we compute the result without recursion (base case).

- We calculate the results recursively for all other inputs (recursive case) as the addition of the two previous numbers in the sequence (n – 1 and n – 2).

- We move towards the base case by subtracting from n in every recursive call.

Maximum recursion depth

There is a default limit to how many recursive calls your program can add to the call stack in Python. This limit is 1,000. If you reach this limit, your program will return the following error:

You can check the recursion depth limit and modify it with the following calls:

Improving runtime with memoization

If you examine our recursive solution to the Fibonacci sequence from before, you’ll notice that we make two recursive calls for each number in the series, and each of those calls makes an additional two calls. Consequently, we get an exponential time complexity of roughly O(2n).

With such a runtime, it takes a long time to compute the results, even for relatively small n.

I’ve added a time measurement to our previous code to verify the exponential increase in the runtime:

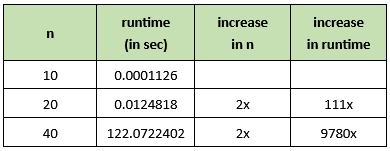

When I run this code on my computer, I get the following runtimes:

We should note that many of the recursive calls made by the algorithm are for previously computed Fibonacci numbers. We can improve the efficiency of our algorithm using a technique called memoization, which consists of caching the results of the recursive calls to avoid duplicated computations.

Below is our improved Fibonacci sequence recursive algorithm, enhanced by memoization:

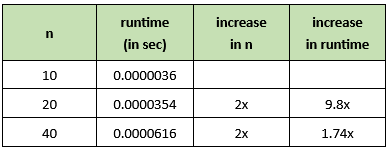

Notice the significant improvement in runtimes. This solution runs in linear time O(n):

Using the lru_cache decorator

The memoization pattern is so helpful and its use so common that it is part of the functools library. From functools, you can import the lru_cache function and use it to decorate your recursive function.

Lru stands for least recently used. Applying this decorator caches the last 128 results from the function and uses them instead of repeating the computation. You can pass the maxsize argument to the decorator if you need to modify the default 128 cache size. You can also pass maxsize=None to allow the cache to grow without bound.

Below is the Fibonacci sequence recursive algorithm using the lru_cache decorator instead of our memoization algorithm:

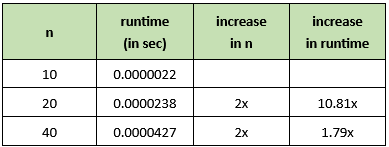

Notice the linear runtime O(n), similar to the runtime obtained from our memoization implementation:

Solutions to common recursion algorithm interview questions

Multiplication

Below is a recursive algorithm for multiplying two numbers, m and n. This solution has a linear time complexity O(n) for increases in the value of the second argument n.

Exponentiation

Below is a recursive algorithm for raising an integer, m, to the power of an integer, n. This solution has a linear time complexity O(n) for increases in the value of the exponent n.

Factorial

Below is a recursive algorithm for computing the factorial of an integer, n. This solution has a linear time complexity O(n) for increases in the value of the n.

Greatest common divisor

Below is a recursive algorithm for computing the greatest common divisor of two integers, a and b. This solution has a logarithmic time complexity O(log n), where n is the maximum value between the two integers a and b.

Sum of all values in a list

Below is a recursive algorithm for computing the sum of all elements in a list of numbers. This solution has a linear time complexity O(n), where n is the length of the list.

Length of a string

Below is a recursive algorithm for counting the number of characters in a string. This solution has a linear time complexity O(n), where n is the length of the string.

Quick sort

Below is a recursive algorithm for quicksorting all elements in a list. This solution has a log-linear time complexity O(n log n), where n is the length of the list.

Linked-list traversal

Below is a recursive algorithm for traversing a linked list. This solution has a linear time complexity O(n), where n is the length of the list.

Tree traversal

Below is a recursive algorithm for traversing a binary tree. This solution has a linear time complexity O(n), where n is the number of nodes in the tree.

Conclusion

In this post, we cover recursion principles and explore the implementation of several recursive algorithms in Python. We also explored how the use of memoization to improve the runtime of recursive algorithms.

Having recursion in your coding tool belt will help you find ways of breaking down complex computing problems into simpler, more manageable parts, which is an essential ability for every programmer and computer scientist.

In the next article, we’ll explore sorting algorithms. See you there!

References

Cracking the coding interview, Gayle Laakmann Madowell, 6th Edition, Chapter 8.

What Is the Maximum Recursion Depth in Python

Memoization: Make Recursive Algorithms Efficient

functools – Higher-order functions and operations on callable objects